Table of Contents

An exploration of the rise of platform engineering, why it’s become essential in modern software organizations.

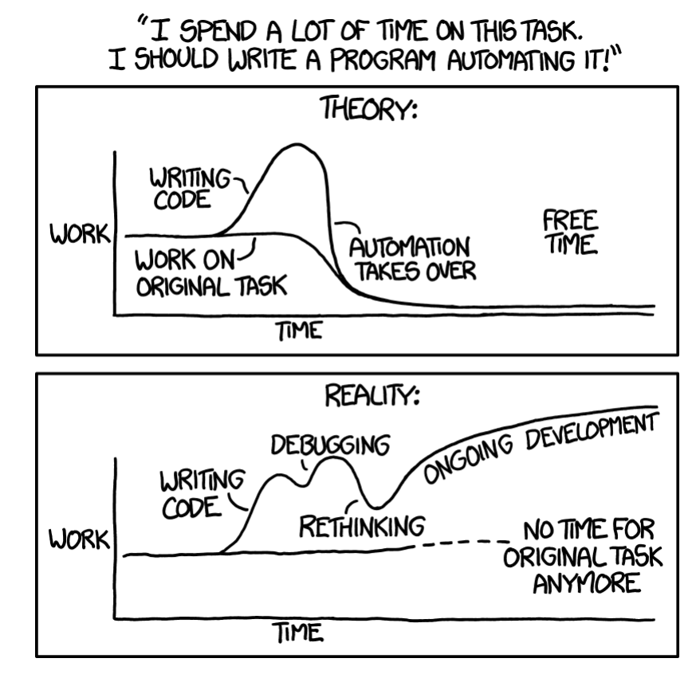

Modern cloud-native delivery has created a compounding tax on engineering velocity. Teams that once owned a monolith and a single deployment script now navigate 15–30 discrete toolchain components across a typical microservices stack, container registries, service meshes, secret managers, policy engines, pipeline orchestrators, telemetry backends, and multiple cloud provider SDKs before a single line of business logic ships to production.

This is not a tooling problem. It is a systems integration problem. Each tool is individually justified, often excellent at its job, but the cognitive cost of composing them correctly and consistently across dozens of engineering teams is where velocity goes to die. The symptom is developer toil: engineers spending 20–40% of their time on non-differentiating infrastructure concerns.

Platform engineering emerged as the structural response. Its premise is straightforward: build internal platforms that treat shared infrastructure concerns as a product, not a shared responsibility. But the execution gap between that premise and a functioning Internal Developer Platform (IDP) is wide, and the failure modes are well-documented.

This guide goes beyond the conceptual. It covers the architectural decisions, toolchain choices, anti-patterns, and measurement frameworks that separate platforms that accelerate delivery from platforms that replicate the centralized-IT bottlenecks DevOps was meant to dissolve.

What Platform Engineering Actually Is

Platform engineering is the practice of building and operating internal platforms that enable developers to deliver software efficiently. Instead of each team assembling its own tooling and infrastructure, a platform team provides a curated set of services, workflows, and abstractions that developers can consume through APIs, templates, or developer portals.

At its core, platform engineering treats infrastructure and tooling as a product for internal users. Developers are the customers, and the platform is designed to reduce friction in common tasks such as provisioning environments, deploying services, managing dependencies, and operating applications in production.

The Internal Developer Platform (IDP)

An IDP is not a portal, a wiki, or a ticketing system with a new name. It is a

self-service, API-driven abstraction layer over your infrastructure, security controls, and operational tooling. Developers interact with the platform through interfaces, CLIs, APIs, or a web UI and receive running, compliant, observable software environments in return.

The IDP consists of five planes:

Plane | Responsibility | Example Tooling |

|---|---|---|

Developer Control Plane | Interface through which developers consume platform capabilities | Backstage, Port, Cortex, custom CLI |

Integration & Delivery | Standardized CI/CD pipelines, artifact promotion gates | Tekton, GitHub Actions, Argo CD, Flux |

Monitoring & Observability | Centralized metrics, logs, traces, alerting defaults | Prometheus, Grafana, OpenTelemetry, Datadog |

Security & Identity | Secrets management, RBAC, image scanning, policy enforcement | Vault, OPA/Gatekeeper, Falco, AWS IAM |

Resource Provisioning | Infrastructure lifecycle, quota management, multi-cloud abstraction | Crossplane, Terraform, Pulumi, AWS Service Catalog |

Difference from DevOps and SRE

Platform engineering builds on the foundations of DevOps and SRE but represents a shift in focus.

DevOps emphasized collaboration between development and operations, encouraging shared ownership of delivery pipelines and infrastructure. SRE introduced reliability practices, focusing on uptime, observability, and operational excellence.

Platform engineering takes the next step by productizing these capabilities. Instead of expecting every team to implement DevOps practices independently, platform teams provide standardized solutions, golden paths, reusable components, and automated workflows that embed best practices by default.

The distinction matters because conflating these practices leads to structural confusion about team ownership.

Dimension | DevOps | SRE | Platform Engineering |

|---|---|---|---|

Primary Concern | Delivery speed, collaboration | Reliability, SLO management | Developer productivity at scale |

Ownership Model | Shared ownership between Dev & Ops | SRE embeds in product teams | Dedicated platform product team |

Key Output | Cultural practices, pipelines | Runbooks, SLIs/SLOs, error budgets | Self-service APIs, golden paths, IDP |

Scaling Model | Doesn't scale without standardization | Scales via automation & runbooks | Scales by reducing team-level variability |

Failure Mode | Re-siloed as teams grow | Becomes a reliability bureaucracy | Becomes a bottleneck if platform-as-gatekeeper |

The shift is from individual tooling to curated platforms. Rather than asking developers to stitch together infrastructure, the platform provides a consistent and opinionated way to build and operate systems.

Core Goals

The primary goals of platform engineering are:

Self-service: Developers can provision resources and deploy applications without relying on ticket-based processes.

Standardization: Common patterns are enforced through templates and workflows, improving consistency and security.

Reduced cognitive load: Developers focus on business logic instead of navigating complex infrastructure.

When executed well, platform engineering transforms fragmented tooling into a cohesive experience, enabling teams to move faster without sacrificing reliability or control.

Why Platform Engineering Is Rising Now

Platform engineering isn’t emerging in a vacuum; it’s a direct response to how complex modern software development has become. As organizations adopt cloud-native architectures, microservices, and specialized tooling, the operational surface area for developers has expanded dramatically.

Toolchain Fragmentation

Today’s developers don’t just write code; they orchestrate systems. A typical workflow may involve source control, CI/CD pipelines, container orchestration, infrastructure-as-code, monitoring platforms, security scanners, feature flags, and multiple cloud services. Each tool solves a specific problem, but together they create a fragmented experience.

This fragmentation increases context switching and cognitive load. Engineers spend more time figuring out how to do things than actually building features. Documentation becomes scattered, workflows inconsistent, and ownership unclear. Over time, productivity suffers not because of a lack of capability, but because of a lack of cohesion.

Scaling Challenges

As teams grow, these problems compound. What works for a small team quickly breaks down at scale. Different teams adopt different tools, patterns, and deployment strategies. Variability increases, and with it, friction.

Without standardization, onboarding slows down. Engineers must learn not just the system, but the unique way each team operates. Dependencies between services become harder to manage, and operational risk increases. Platform engineering addresses this by introducing opinionated defaults that reduce variability without eliminating flexibility.

Business Drivers

Beyond technical concerns, platform engineering is driven by clear business needs. Organizations are under constant pressure to deliver features faster, maintain high reliability, and meet growing compliance requirements.

Platform teams embed these requirements into the system itself:

Faster time-to-market through reusable workflows and automation

Improved reliability via standardized observability and deployment practices

Compliance and security are built into the infrastructure and pipelines

This shifts responsibility from individual teams to shared systems, reducing the likelihood of errors and inconsistencies.

The Platform as a Productivity Multiplier

Ultimately, platform engineering transforms internal infrastructure into a productivity multiplier. Instead of each team solving the same problems repeatedly, the platform provides reusable solutions that scale across the organization.

When done well, it enables engineers to focus on what matters: delivering value to users, while the platform handles the complexity behind the scenes.

Platform Engineering as an Enabler

At its best, platform engineering acts as a force multiplier for developer productivity. Abstracting complexity and standardizing common workflows allows teams to move faster without sacrificing reliability or security. The key is not removing flexibility, but providing better defaults.

Golden Paths and Paved Roads

One of the most impactful patterns in platform engineering is the concept of golden paths opinionated, pre-approved workflows for common use cases. Instead of asking teams to design deployment pipelines, infrastructure patterns, or service templates from scratch, the platform provides ready-to-use scaffolding.

These paved roads embed best practices by default:

Secure configurations and access controls

Integrated CI/CD pipelines

Standard logging, monitoring, and alerting

Consistent deployment patterns

This dramatically reduces setup time and eliminates repetitive decision-making. Teams can spin up new services quickly while staying aligned with organizational standards.

Service Catalogs and Discovery

As systems grow, visibility becomes a major bottleneck. Engineers often struggle to answer basic questions: Who owns this service? What does it depend on? Where is it deployed?

Platform engineering addresses this through service catalogs centralized inventories of services, APIs, and infrastructure components. These catalogs provide:

Clear ownership and team responsibility

Dependency relationships between systems

Links to dashboards, documentation, and runbooks

By making the system discoverable, platform teams reduce reliance on tribal knowledge and improve collaboration across teams.

Self-Service Infrastructure

A defining feature of an effective platform is self-service capability. Developers should be able to provision environments, configure pipelines, manage secrets, and access observability tools without relying on ticket-based processes.

This doesn’t eliminate governance; it embeds it into workflows. Policies, quotas, and security controls are enforced automatically behind the scenes. The result is a system where developers can move quickly while staying within safe boundaries.

Self-service infrastructure shifts platform teams from gatekeepers to enablers, removing bottlenecks and freeing engineers to focus on building.

Developer Experience as a Differentiator

Ultimately, platform engineering is about improving developer experience (DevEx). When onboarding is faster, workflows are consistent, and tools are easy to use, engineers spend less time navigating systems and more time delivering value.

This has tangible outcomes:

Reduced onboarding time for new hires

Increased deployment frequency

Higher developer satisfaction and retention

In competitive engineering environments, DevEx is not just an internal concern; it’s a strategic advantage. Organizations that invest in well-designed platforms empower their teams to ship faster, with greater confidence and less friction.

Platform Engineering as a Potential Bottleneck

Platforms fail when abstractions are designed for the platform team's operational convenience rather than the developer's mental model. A common manifestation: abstracting Kubernetes resources into a proprietary YAML DSL that is neither Kubernetes-compatible nor portable, requiring developers to learn a new configuration language with no ecosystem support.

The Platform Abstraction Fitness Test: a good abstraction reduces the number of decisions a developer must make while preserving their ability to reason about what will actually happen in production. If a developer cannot predict the behavior of a production incident from the platform's abstraction layer, the abstraction is too thick.

Anti-Pattern: Building a 'magic' deployment system where developers cannot understand what Kubernetes resources are created, what IAM roles their service runs with, or why their pod was evicted. Over-abstraction that removes visibility is indistinguishable from opacity. |

Adoption Failure: Platform as Shelfware

The most reliable signal that a platform has failed is low adoption. Engineers will bypass, fork, or route around a platform that creates more friction than it removes. This manifests as:

Teams maintaining private Terraform modules that duplicate platform functionality

Engineers SSHing into CI runners to debug problems that the platform's abstraction obscures

Separate deployment pipelines per team because the platform's pipeline is too opinionated for edge cases

Slack channels are full of platform workaround tips that newer engineers never discover

The root cause is almost always building features based on assumptions about developer needs rather than validated pain. Platform teams embedded in infrastructure are not embedded in product delivery workflows; they do not feel the friction they are supposed to remove.

Fix: Embed platform engineers in product teams for 2-week rotations. Run structured friction logs: ask engineers to record every time they do something that feels like it should be automated or standardized. Map these logs to platform backlog items. Prioritize ruthlessly by frequency and time cost.

The Distributed Monolith Problem

A poorly designed platform creates a distributed monolith: all teams are coupled to the platform's release cadence, and a platform bug or breaking change takes down multiple teams simultaneously. This is the centralization risk realized.

Platform teams should apply the same engineering standards to their own APIs that product teams apply to external ones: semantic versioning, changelog maintenance, deprecation notices, and migration tooling. The platform is not special infrastructure it is a product with a contractual interface.

Rebuilding the Old IT Bureaucracy

The structural risk that is hardest to avoid: as platform teams grow, they acquire the organizational gravity of a centralized IT function. Headcount justification incentivizes building more features. Feature growth increases the maintenance surface. Maintenance burden leads to slower response times. Developers begin routing requests through tickets again.

The organizational check on this is a platform product manager who operates a

platform cost model: every feature built by the platform team has a measured cost (build + maintain) and a measured benefit (developer hours saved, incidents prevented, compliance violations avoided). Features whose cost exceeds their benefit are candidates for deprecation, not expansion.

Building a Platform That Works

The difference between platform engineering as an enabler versus a bottleneck comes down to how it is built, operated, and evolved. The most successful organizations approach platforms not as internal infrastructure projects, but as products with real users, clear value, and continuous iteration.

Phase 1: Identify and Baseline the Real Bottlenecks

The first mistake is starting with tooling. Before selecting a portal framework or writing a single Helm chart, baseline where developer time actually goes. The mechanism:

Developer time audit: Instrument CI/CD systems to measure time from commit to production-ready artifact. Break down by stage: build, test, security scan, approval gates, deployment, smoke tests.

Friction log sprint: One-week exercise where every engineer records every manual step, every wait, every context switch required to ship a change. Aggregate into a frequency/severity matrix.

Incident retrospective mining: Review the last 90 days of incident retrospectives for recurring toil items, manual rollback procedures, missing runbooks, and inconsistent alerting thresholds. These are platform product requirements.

DORA metrics baseline: Measure Deployment Frequency, Lead Time for Changes, Mean Time to Recovery, and Change Failure Rate per DORA methodology before any platform investment. You cannot demonstrate ROI without a baseline.

This produces a ranked list of developer pain points with frequency and cost data. Build the first version of the platform around the top three items only.

Phase 2: The Minimum Viable Platform

A Minimum Viable Platform (MVP) is not a portal with five empty pages; it is a narrow, working solution to a specific, high-frequency problem. Common candidates:

Platform Capability | Value Delivered | Realistic Build Time (Small Team) |

|---|---|---|

Standardized CI pipeline template | Eliminate 15+ hours/service of pipeline setup; enforce security gates | 2–4 weeks |

Service scaffolding CLI (golden path) | Service bootstrap from days to minutes; consistent repo structure | 3–6 weeks |

Self-service environment provisioning | Eliminate ticket-based infra requests; dev/staging on-demand | 4–8 weeks |

Centralized secrets management | Eliminate hardcoded credentials; auto-rotation; audit trail | 2–4 weeks |

Internal service catalog (read-only) | Reduce 'who owns this?' escalations; dependency visibility | 2–3 weeks |

Principle: Ship the MVP for one capability and measure adoption before building the next. A platform with 80% adoption of two capabilities outperforms a platform with 15% adoption of ten. |

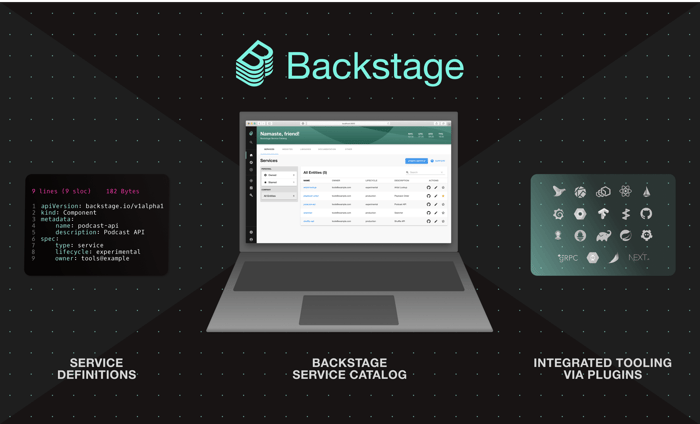

Phase 3: The Developer Portal

Backstage (CNCF) is the de facto standard for developer portal infrastructure. It provides a plugin-based framework that aggregates service catalog data, documentation, CI/CD status, cloud cost, and security findings into a single developer-facing interface. It is not a product; it is a framework that requires significant investment to configure, extend, and maintain.

The critical Backstage implementation decisions that determine whether it becomes essential infrastructure or shelfware:

Catalog ingestion strategy: Auto-discover existing services from GitHub/GitLab org scanning rather than requiring a manual catalog.yaml registration. Adoption drops sharply when registration is manual.

Plugin selection discipline: Backstage has 100+ community plugins. Install only plugins backed by a team committed to maintenance. Unmaintained plugins become compatibility blockers on core Backstage upgrades.

TechDocs integration: Configure MkDocs-based documentation to render directly in Backstage from the service repo. Engineers who can view docs, runbooks, and architecture diagrams alongside service health in one interface actually use the portal.

Search indexing: The value of Backstage scales with catalog completeness and search quality. Invest in indexing API specs, runbooks, and ADRs (Architecture Decision Records) from the start.

Phase 4: Measuring What Matters

Platform ROI is measured in developer outcomes, not platform features shipped. The metrics that matter:

Metric | What It Measures | Collection Method | Target Direction |

|---|---|---|---|

Deployment Frequency | How often teams ship to production | CD system event logs | Increase |

Lead Time for Changes | Commit to production time | Git commit timestamp → deployment event | Decrease |

Platform Adoption Rate | % of teams using golden paths vs. custom | CI/CD pipeline tag analysis | Increase |

Time to First Deploy (New Service) | Golden path effectiveness | Service creation to first prod deployment | Decrease |

Developer NPS (platform-specific) | Perceived value and friction | Quarterly 5-question survey | Increase |

P50/P95 Pipeline Duration | CI/CD efficiency | Pipeline timing data | Decrease |

Mean Time to Onboard (new engineers) | Documentation and scaffolding quality | HR + engineering survey | Decrease |

Compliance Violation Rate | Policy enforcement effectiveness | OPA audit logs, security scan failures | Decrease |

Report these metrics in a platform health dashboard accessible to all engineering leadership. Transparency about platform performance, including metrics that are moving in the wrong direction, builds credibility and surfaces problems before they compound.

Toolchain Reference: Build vs. Buy vs. OSS

Platform teams face recurring build/buy/adopt decisions across the IDP capability surface. The following reference captures the current state of the ecosystem for each major platform concern. These are not endorsements; the right choice depends on your existing toolchain, team expertise, and scale requirements.

Platform Capability | OSS Options | Commercial Options | Build-Your-Own Signal |

|---|---|---|---|

Developer Portal | Backstage (CNCF), Gitea | Port, Cortex, OpsLevel | Never. Portal is a commodity; differentiate via plugins |

CI/CD Pipelines | Tekton, Argo Workflows, GitHub Actions, GitLab CI | CircleCI, Buildkite, Harness | Only for highly specialized hardware requirements |

GitOps / CD | Argo CD, Flux CD | Harness GitOps, Codefresh | Rarely .Argo CD/Flux cover 95% of use cases |

Infrastructure Provisioning | Crossplane, Terraform, Pulumi, OpenTofu | Env0, Spacelift, Terraform Cloud | When cloud provider APIs not covered by existing providers |

Secrets Management | HashiCorp Vault (OSS), External Secrets Operator | Vault Enterprise, AWS Secrets Manager, Azure Key Vault | Never. Security-critical tooling warrants proven implementations |

Policy Enforcement | OPA/Gatekeeper, Kyverno | Styra DAS, Snyk | For domain-specific policy logic on top of OPA |

Observability | Prometheus, Grafana, OpenTelemetry, Jaeger | Datadog, New Relic, Dynatrace, Honeycomb | Rarely. Telemetry ingestion/storage is expensive to operate |

Service Mesh | Istio, Linkerd, Cilium | Solo.io, Tetrate | Never for core mesh; extend with custom Envoy filters if needed |

Cost Note: The observability row warrants attention: running a self-hosted Prometheus/Thanos/Grafana stack at scale (>500 services, >5M metrics/s) has significant operational cost. Many organizations underestimate the engineering overhead and migrate to managed observability after the fact. Factor this into the initial architecture decision. |

Organizational Design: The Platform Team

Team Topology and the Platform Team Model

Team Topologies (Skelton & Pais) formalizes what successful platform organizations have learned empirically: the platform team is a stream-aligned team enabler, not a centralized ops function. The distinction determines almost everything about how the team operates.

A platform team operates as:

A product team with a backlog, roadmap, and product manager

An internal service provider with SLOs for the platform itself

An X-as-a-Service interface to stream-aligned product teams minimal cognitive load imposed on consumers

It does not operate as:

An approval body for infrastructure changes

An on-call rotation for product team infrastructure incidents (platform team is on-call for the platform; product teams are on-call for their services)

A consultancy that embeds in teams to do work product teams should own

Sizing and Composition

There is no universal ratio, but a practical guideline: one platform engineer can effectively support 8–15 product engineers at steady state, assuming the platform is handling high-frequency, standardized use cases. Edge cases and custom requirements increase this burden.

Minimum viable platform team composition:

Role | Responsibility | Anti-Pattern to Avoid |

|---|---|---|

Platform Engineer (2–3) | Build and operate IDP capabilities, golden paths, and automation | Becoming a ticket-based request fulfilment function |

Platform Product Manager (1) | Roadmap, developer feedback loops, adoption metrics, stakeholder alignment | PM role filled by the tech lead loses user empathy focus |

Developer Experience (1, at scale) | Docs, onboarding, developer surveys, friction reduction | Treating DevEx as a nice-to-have until adoption fails |

Security Enablement (embedded or liaised) | Policy-as-code, security golden paths, vulnerability response | Security team as an external gatekeeper rather than a platform contributor |

The Adoption Flywheel

Platform adoption follows a network-effect pattern: the more teams use the platform, the more feedback improves it, which increases adoption. The inverse is also true. Kickstarting the flywheel requires a deliberate early adopter strategy:

Identify 1–2 willing early adopter teams: ideally, teams that frequently experience the pain points the platform addresses. Do not mandate platform adoption initially.

Co-build the first golden path with the early adopter team present. Their requirements drive the implementation.

Measure and publicize wins: when the early adopter ships 60% faster with the platform, that data is the most effective internal marketing.

Let organic adoption drive expansion: teams that see adjacent teams moving faster will seek out the platform. Forced adoption without demonstrated value creates resentment.

Conclusion

Platform engineering is neither inherently an enabler nor a bottleneck; it is defined entirely by how it is executed. The same platform can accelerate delivery or slow it down, depending on whether it prioritizes developer experience or organizational control.

When approached with product thinking and developer empathy, platform engineering becomes a true force multiplier. It reduces cognitive load, standardizes best practices, and empowers teams to ship faster with confidence. It creates leverage across the organization, turning complexity into a managed advantage.

Without that mindset, however, platforms risk becoming yet another layer of bureaucracy: rigid, underutilized, and disconnected from the needs of the teams they are meant to serve.

The distinction is simple but critical: platforms succeed when developers choose to use them, not when they are forced to.

The future belongs to organizations that build platforms developers want to use intuitive, valuable, and continuously evolving alongside the teams they support.

Akava would love to help your organization adapt, evolve and innovate your modernization initiatives. If you’re looking to discuss, strategize or implement any of these processes, reach out to bd@akava.io and reference this post.